LangChain vs CrewAI vs Building from Scratch — My Experience

Introduction

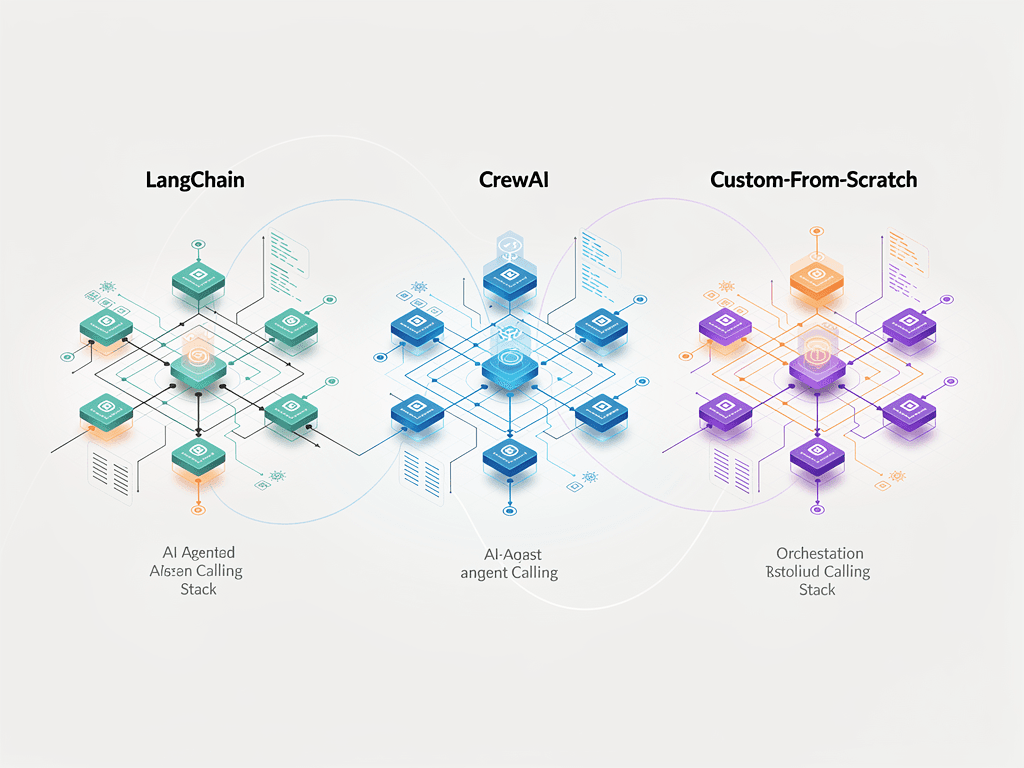

Over the past year, I've built 3 projects with LangChain, 2 with CrewAI, and one agent system from scratch without any framework. My conclusion might surprise you: heavier frameworks aren't always better, but going completely frameworkless isn't always best either — what matters is what stage your project is at and what technical capabilities your team has.

The Problem

In 2026, LangChain (including LangGraph) and CrewAI are the two most active players in the AI agent framework market. LangChain has over 47 million monthly downloads on PyPI, making it the largest ecosystem. CrewAI is the fastest-growing, especially for multi-agent scenarios.

But there's a third path: building from scratch. Calling the Claude or GPT API directly, writing your own agent loop, tool invocation, and state management. It sounds complex, but in certain scenarios it's actually the most efficient choice.

I've taken all three approaches on real projects, and this article shares the actual comparison data and selection guidance.

Core Comparison

LangChain + LangGraph

LangChain launched in late 2022 as a framework for building LLM applications. LangGraph, its directed-graph-based agent orchestration engine, followed in 2024. The core idea: you represent each processing step as a Node, and define the transitions between steps as Edges.

```python
from langgraph.graph import StateGraph, MessagesState, START, END
from langchain_anthropic import ChatAnthropic
from langchain_core.tools import tool


# Define tools
@tool
def search_database(query: str) -> str:
    """Search the product database"""
    # Actual database query logic
    return f"Found 3 results for '{query}'"


@tool
def calculate_price(product_id: str, quantity: int) -> str:
    """Calculate total price for a product"""
    # Actual price calculation logic
    prices = {"SKU001": 99.0, "SKU002": 149.0}
    total = prices.get(product_id, 0) * quantity
    return f"Total: ¥{total}"


# Look tools up by name so the tool node needs no if/elif chain
TOOLS = {t.name: t for t in (search_database, calculate_price)}

# Initialize the model (use a model ID your account has access to)
model = ChatAnthropic(
    model="claude-sonnet-4-5"
).bind_tools(list(TOOLS.values()))


# Define graph nodes
def call_model(state: MessagesState):
    response = model.invoke(state["messages"])
    return {"messages": [response]}


def call_tools(state: MessagesState):
    """Execute tool calls"""
    last_message = state["messages"][-1]
    results = []
    for tool_call in last_message.tool_calls:
        selected = TOOLS.get(tool_call["name"])
        if selected is None:
            # Report unknown tools back to the model instead of failing
            result = f"Unknown tool: {tool_call['name']}"
        else:
            result = selected.invoke(tool_call["args"])
        results.append({"role": "tool", "content": result, "tool_call_id": tool_call["id"]})
    return {"messages": results}


# Route to the tool node while the model keeps requesting tools
def should_continue(state: MessagesState):
    last = state["messages"][-1]
    if getattr(last, "tool_calls", None):
        return "tools"
    return END


# Build the graph
graph = StateGraph(MessagesState)
graph.add_node("agent", call_model)
graph.add_node("tools", call_tools)
graph.add_edge(START, "agent")
graph.add_conditional_edges("agent", should_continue, {"tools": "tools", END: END})
graph.add_edge("tools", "agent")

app = graph.compile()
```

LangGraph's strengths:

- Clean state management — every step's input and output is explicitly defined

- Supports loops and conditional branching — suited for complex agent logic

- Built-in checkpoint mechanism: it can persist intermediate state and resume a run from the last saved step after an interruption

- Large community ecosystem — plenty of integrations and example code
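
To make the checkpoint idea concrete, here is a minimal plain-Python sketch of what "persist intermediate state and resume" means. It is not LangGraph's API: `run_with_checkpoints`, the step functions, and the JSON file layout are all hypothetical, chosen only for illustration.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

State = dict
Step = Callable[[State], State]


def run_with_checkpoints(steps: list[tuple[str, Step]], state: State, path: Path) -> State:
    """Run named steps in order, saving state after each one.

    If a checkpoint file already exists, resume after the last completed step.
    """
    done: list[str] = []
    if path.exists():
        saved = json.loads(path.read_text())
        state, done = saved["state"], saved["done"]

    for name, step in steps:
        if name in done:
            continue  # finished in an earlier run, skip it
        state = step(state)
        done.append(name)
        path.write_text(json.dumps({"state": state, "done": done}))
    return state


# Hypothetical steps: "classify" fails on its first call to simulate a crash.
calls = {"fetch": 0, "classify": 0}


def fetch(state: State) -> State:
    calls["fetch"] += 1
    return {**state, "ticket": "refund request"}


def classify(state: State) -> State:
    calls["classify"] += 1
    if calls["classify"] == 1:
        raise RuntimeError("simulated crash")
    return {**state, "label": "billing"}


steps = [("fetch", fetch), ("classify", classify)]
checkpoint = Path(tempfile.mkdtemp()) / "run.json"

try:
    run_with_checkpoints(steps, {}, checkpoint)
except RuntimeError:
    pass  # the first run crashed after "fetch" was checkpointed

final_state = run_with_checkpoints(steps, {}, checkpoint)  # resumes at "classify"
```

The second run skips `fetch` because its result was already saved. A framework's checkpointer adds the parts this sketch leaves out, such as storage backends and per-conversation keys.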

LangGraph's problems:

- Too many abstraction layers — a simple tool call passes through LangChain Core, LangGraph, and the Provider, three layers of wrapping

- Iterates too fast — my code from last year won't run this year due to frequent breaking API changes

- Debugging is painful — error messages often point to framework internals, making it hard to locate the real issue

CrewAI

CrewAI's core abstraction is "roles." You don't need to think about nodes and edges — just define who does what:

```python
from crewai import Agent, Task, Crew, Process

# Define agents (by role)
researcher = Agent(
    role="Market Researcher",
    goal="Collect competitor pricing, features, and user review data",
    backstory="You are a seasoned market analyst skilled at extracting key insights from public information",
    llm="anthropic/claude-sonnet-4-5",  # use a model ID your account has access to
    verbose=True
)

writer = Agent(
    role="Content Writer",
    goal="Write an analysis report based on research data",
    backstory="You are a technical content expert skilled at turning complex information into highly readable articles",
    llm="anthropic/claude-sonnet-4-5",
    verbose=True
)

reviewer = Agent(
    role="Quality Reviewer",
    goal="Ensure accuracy and readability of the report",
    backstory="You are a rigorous editor who focuses on data accuracy and logical consistency",
    llm="anthropic/claude-haiku-4-5",  # Use a cheaper model for review
    verbose=True
)

# Define tasks
research_task = Task(
    description="Research the latest pricing and core features of these competitors: {products}",
    expected_output="Structured competitor analysis data (JSON format)",
    agent=researcher
)

writing_task = Task(
    description="Based on the research data, write a 2000-word competitor analysis report",
    expected_output="Markdown-formatted analysis report",
    agent=writer,
    context=[research_task]  # Depends on research task output
)

review_task = Task(
    description="Review the report quality, checking data accuracy and logical consistency",
    expected_output="Review comments and revision suggestions",
    agent=reviewer,
    context=[writing_task]
)

# Assemble the crew and execute
crew = Crew(
    agents=[researcher, writer, reviewer],
    tasks=[research_task, writing_task, review_task],
    process=Process.sequential,  # Sequential execution
    verbose=True
)

result = crew.kickoff(inputs={"products": "ChatGPT, Claude, Gemini"})
```

CrewAI's strengths:

- Intuitive abstraction — the role + task model matches how people think

- Quick to learn — you can have a multi-agent flow running in 30 minutes

- Built-in inter-agent communication — no manual data passing required
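
The "no manual data passing" point can be pictured with a plain-Python sketch. This is not CrewAI's implementation: `SketchTask`, `run_sequential`, and the lambda workers are hypothetical stand-ins that show how each task could receive the outputs of the tasks it lists as context.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SketchTask:
    name: str
    worker: Callable[[str], str]  # stand-in for an agent call
    description: str
    context: list["SketchTask"] = field(default_factory=list)


def run_sequential(tasks: list[SketchTask]) -> dict[str, str]:
    """Run tasks in order, prepending the outputs of their context tasks."""
    outputs: dict[str, str] = {}
    for task in tasks:
        upstream = "\n".join(outputs[dep.name] for dep in task.context)
        prompt = f"{upstream}\n{task.description}" if upstream else task.description
        outputs[task.name] = task.worker(prompt)
    return outputs


research = SketchTask("research", lambda p: "DATA[pricing]", "Collect pricing data")
writing = SketchTask(
    "writing",
    lambda p: f"REPORT({p.splitlines()[0]})",  # echoes the upstream output it received
    "Write the report",
    context=[research],
)

outputs = run_sequential([research, writing])
```

The writing worker never asks for the research output; the runner injects it. That is the convenience the role + task model buys, at the cost of less control over exactly what ends up in each prompt.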

CrewAI's problems:

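- LangChain heritage: early versions of CrewAI were built on top of LangChain and inherited some of its complexity. Recent releases are documented as independent of LangChain, so how much this applies depends on the version you pin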

- Limited flexibility — complex conditional branching and loops are hard to implement

- Thin production features: beyond basic per-agent request and retry limits, I had to add my own error handling, rate limiting, and monitoring

Building from Scratch

When you need full control over agent behavior, or your project logic is simple enough that framework abstractions aren't needed, writing it yourself is the most efficient path:

```python
import anthropic
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentConfig:
    name: str
    system_prompt: str
    model: str = "claude-sonnet-4-5"  # use a model ID your account has access to
    max_tokens: int = 4096


class SimpleAgent:
    """An agent implementation with zero framework dependencies"""

    def __init__(self, config: AgentConfig):
        self.config = config
        self.client = anthropic.Anthropic()
        # name -> {"definition": API tool schema, "func": Python callable}
        self.tools: dict[str, dict] = {}

    def register_tool(self, name: str, description: str, schema: dict, func: Callable):
        """Register a tool"""
        self.tools[name] = {
            "definition": {
                "name": name,
                "description": description,
                "input_schema": schema
            },
            "func": func
        }

    def run(self, user_input: str) -> str:
        """Execute the agent loop"""
        messages = [{"role": "user", "content": user_input}]
        request = {
            "model": self.config.model,
            "max_tokens": self.config.max_tokens,
            "system": self.config.system_prompt,
        }
        tool_defs = [t["definition"] for t in self.tools.values()]
        if tool_defs:
            # Only send `tools` when at least one tool is registered
            request["tools"] = tool_defs

        # Agent loop: call LLM -> execute tools -> call LLM again
        for _ in range(10):  # Max 10 rounds to prevent infinite loops
            response = self.client.messages.create(messages=messages, **request)

            # Any stop reason other than a tool request (end_turn, max_tokens, ...) ends the loop
            if response.stop_reason != "tool_use":
                return self._extract_text(response)

            # Handle tool calls
            messages.append({"role": "assistant", "content": response.content})
            tool_results = []
            for block in response.content:
                if block.type != "tool_use":
                    continue
                entry = self.tools.get(block.name)
                if entry is None:
                    # Tell the model the tool does not exist instead of raising KeyError
                    tool_results.append({
                        "type": "tool_result",
                        "tool_use_id": block.id,
                        "content": f"Unknown tool: {block.name}",
                        "is_error": True
                    })
                    continue
                result = entry["func"](**block.input)
                tool_results.append({
                    "type": "tool_result",
                    "tool_use_id": block.id,
                    "content": str(result)
                })
            messages.append({"role": "user", "content": tool_results})

        return "Maximum loop count reached. Please simplify your question."

    def _extract_text(self, response) -> str:
        return "".join(b.text for b in response.content if b.type == "text")


# Multi-agent orchestration (minimal version)
class AgentPipeline:
    """Execute multiple agents sequentially"""

    def __init__(self):
        self.steps: list[tuple[SimpleAgent, str]] = []

    def add_step(self, agent: SimpleAgent, prompt_template: str):
        self.steps.append((agent, prompt_template))

    def run(self, initial_input: str) -> str:
        current_output = initial_input
        for agent, template in self.steps:
            # Each template must contain an {input} placeholder
            prompt = template.format(input=current_output)
            current_output = agent.run(prompt)
        return current_output
```
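
One practical benefit of owning the loop is that it can be exercised offline. The sketch below is an illustration under assumptions, not part of the code above: `run_loop`, `scripted_model`, and the plain-dict message shapes are hypothetical, loosely mirroring the tool-use flow, with a scripted function standing in for the real API call.

```python
from typing import Callable

Tool = Callable[..., str]


def run_loop(call_model: Callable[[list[dict]], dict], tools: dict[str, Tool],
             user_input: str, max_rounds: int = 10) -> str:
    """Call the model, run any requested tools, feed results back, repeat."""
    messages: list[dict] = [{"role": "user", "content": user_input}]
    for _ in range(max_rounds):
        reply = call_model(messages)
        if reply["stop_reason"] != "tool_use":
            return reply["text"]
        messages.append({"role": "assistant", "content": reply["tool_calls"]})
        results = []
        for call in reply["tool_calls"]:
            func = tools.get(call["name"])
            # Report unknown tools back to the model instead of crashing
            content = func(**call["input"]) if func else f"Unknown tool: {call['name']}"
            results.append({"tool_use_id": call["id"], "content": content})
        messages.append({"role": "user", "content": results})
    return "Maximum loop count reached."


def scripted_model(messages: list[dict]) -> dict:
    """Hypothetical stand-in for the LLM: request one tool call, then answer."""
    if len(messages) == 1:
        call = {"id": "call_1", "name": "calculate_price",
                "input": {"product_id": "SKU001", "quantity": 2}}
        return {"stop_reason": "tool_use", "tool_calls": [call]}
    tool_output = messages[-1]["content"][0]["content"]
    return {"stop_reason": "end_turn", "text": f"The order comes to {tool_output}."}


def calculate_price(product_id: str, quantity: int) -> str:
    prices = {"SKU001": 99.0, "SKU002": 149.0}
    return f"{prices.get(product_id, 0) * quantity:.2f}"


answer = run_loop(scripted_model, {"calculate_price": calculate_price}, "Price of 2x SKU001?")
```

Passing the model call in as a function keeps the loop testable without network access or an API key, which is harder to arrange when the loop lives inside a framework.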

Building from scratch — strengths:

- No framework dependencies: nothing beyond the provider SDK, so no worrying about framework version updates breaking your code

- Full control — every line of code is yours, debugging goes straight to the source

- Best performance — no framework abstraction overhead

Building from scratch — problems:

- You have to build infrastructure yourself — error handling, retries, logging, monitoring all need custom code

- Code volume balloons for complex orchestration — conditional branching, parallel execution, state persistence require a lot of code

- Higher team collaboration cost — without unified abstractions, everyone may write agent code in different styles
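
As an example of that infrastructure, here is a minimal retry helper with exponential backoff. It is a sketch under assumptions: `with_retries` and `flaky_call` are hypothetical names, and a real system would catch only the transient error types its API client raises rather than every `Exception`.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(func: Callable[[], T], attempts: int = 3, base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call func, retrying on failure with delays of base_delay * 2**n."""
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts, surface the last error
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")


# Hypothetical flaky call: fails twice, then succeeds.
state = {"calls": 0}


def flaky_call() -> str:
    state["calls"] += 1
    if state["calls"] < 3:
        raise ConnectionError("simulated timeout")
    return "ok"


delays: list[float] = []
result = with_retries(flaky_call, sleep=delays.append)  # record delays instead of sleeping
```

Injecting `sleep` keeps the helper fast to test. Logging and monitoring need the same kind of small, deliberate pieces, and they add up.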

Real-World Results

Actual Comparison Across Three Projects

| Dimension | Project A (LangGraph) | Project B (CrewAI) | Project C (From Scratch) |
|---|---|---|---|
| Project type | Customer service agent | Content production | Data analysis |
| Development time | 3 weeks | 1 week | 2 weeks |
| Lines of code | 2,400 | 800 | 1,600 |
| Runtime latency | 4.2s/request | 12.5s/task | 3.1s/request |
| Framework overhead | ~200ms | ~800ms | 0ms |
| Maintenance difficulty | Medium (frequent version upgrades) | Low | Medium (many custom components) |
| Extensibility | High | Medium | High (but you build it yourself) |

Note the latency numbers: CrewAI took 12.5 seconds to execute 3 sequential agent tasks, of which roughly 800ms was framework overhead (agent role initialization, internal communication protocol). LangGraph's overhead was about 200ms. Building from scratch had zero overhead.
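
To put those overhead figures in proportion, the share of total latency can be computed directly from the reported numbers. The values are the ones given above; the variable names are only for illustration.

```python
# (total latency in seconds, framework overhead in seconds), as reported above
measurements = {
    "LangGraph": (4.2, 0.2),
    "CrewAI": (12.5, 0.8),
    "From scratch": (3.1, 0.0),
}

overhead_share = {
    name: round(overhead / total * 100, 1)
    for name, (total, overhead) in measurements.items()
}

for name, share in overhead_share.items():
    print(f"{name}: {share}% of latency is framework overhead")
```

That works out to about 4.8% for LangGraph and 6.4% for CrewAI. The totals are not directly comparable (per request for Projects A and C, per three-agent task for Project B), but in each case most of the time is spent in model and tool calls, not in the framework.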

A Framework Selection Decision Tree

After these three projects, I distilled a decision tree for framework selection:

Question 1: How complex is your agent logic?

- Simple linear flow (A -> B -> C) -> CrewAI or build from scratch

- Has conditional branches and loops -> LangGraph

- Very simple (one agent + a few tools) -> Build from scratch

Question 2: What's your team's technical level?

- Product / ops team -> CrewAI (most intuitive abstraction)

- Engineering team -> LangGraph or build from scratch

- Solo developer -> Depends on project complexity

Question 3: How much production stability do you need?

- High (customer-facing product) -> LangGraph (most mature) or build from scratch (full control)

- Medium (internal tool) -> Any of the three

- Low (prototype validation) -> CrewAI (fastest)

Question 4: How many integrations do you need?

- Many third-party integrations -> LangChain (largest ecosystem)

- Just a handful of APIs -> Build from scratch (writing tools directly is more straightforward)
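
The four questions can be condensed into a small function. This is one possible encoding, not a definitive rule set: the `recommend` function, its parameter names, and the order in which the questions are checked are a simplification of the list above.

```python
def recommend(logic: str, team: str, stability: str, many_integrations: bool) -> str:
    """Suggest an approach from the four questions.

    logic: "single_agent", "linear", or "branching"
    team: "product", "engineering", or "solo"
    stability: "low", "medium", or "high"
    """
    if many_integrations:
        return "LangChain / LangGraph"  # Question 4: largest ecosystem
    if logic == "branching":
        return "LangGraph"  # Question 1: conditional branches and loops
    if logic == "single_agent":
        return "Build from scratch"  # Question 1: one agent + a few tools
    # Remaining case: a simple linear flow
    if team == "product" or stability == "low":
        return "CrewAI"  # Questions 2 and 3: most intuitive, fastest to prototype
    return "CrewAI or build from scratch"


prototype = recommend("linear", "product", "low", many_integrations=False)
support_bot = recommend("branching", "engineering", "high", many_integrations=False)
```

Here `prototype` comes out as CrewAI and `support_bot` as LangGraph. Real decisions weigh the questions against each other instead of checking them in a fixed order, so treat the output as a starting point.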

My Actual Choices

In my own projects:

- Content production agent team (the project behind what you're reading right now): built from scratch. The workflow is fixed, and I need precise control over every agent's prompt and output format.

- Customer demos: CrewAI. It lets you quickly build an impressive-looking multi-agent demo.

- Enterprise client production systems: LangGraph. Clients need state persistence, the ability to resume interrupted runs from a checkpoint, and audit logs, all of which LangGraph supports out of the box.

Takeaways

Three core takeaways:

- There's no "best" framework, only the most suitable one: selection depends on project complexity, team capabilities, and production requirements, not GitHub stars.

- A framework's value lies in how much of it you actually use: if you're only using LangChain's ChatModel and Tool, you don't actually need LangChain. The framework's value is in its advanced features (state management, checkpoints, visualization). If you're not using those, it's pure overhead.

- Start simple, introduce a framework only when needed: my recommendation is to get the agent logic working with the raw API first, confirm you need a specific capability that a framework provides, then bring it in. Don't pick a framework just because "everyone else is using it."

What framework are you using to build agents? What pitfalls have you hit? I'd love to hear about your experience.